How to Evaluate Education Research

Also, an argument: real effects are small

I’m a big believer in evidence-informed teaching. I think research has a lot to offer teachers, and I try to write about ideas that are supported by education research.

In the real world where I receive professional development and have random education consultants tell me what to do, the things I hear as evidence-based are...interesting.

Over time I’ve developed a kind of bullshit detector for evidence. There’s no one thing that can tell you if an idea is or is not evidence-based. But looking across a number of dimensions, teachers can make better-informed decisions based on the evidence we’re shown. This post is a kind of crash course in how to think critically about evidence in education.

Effect Size

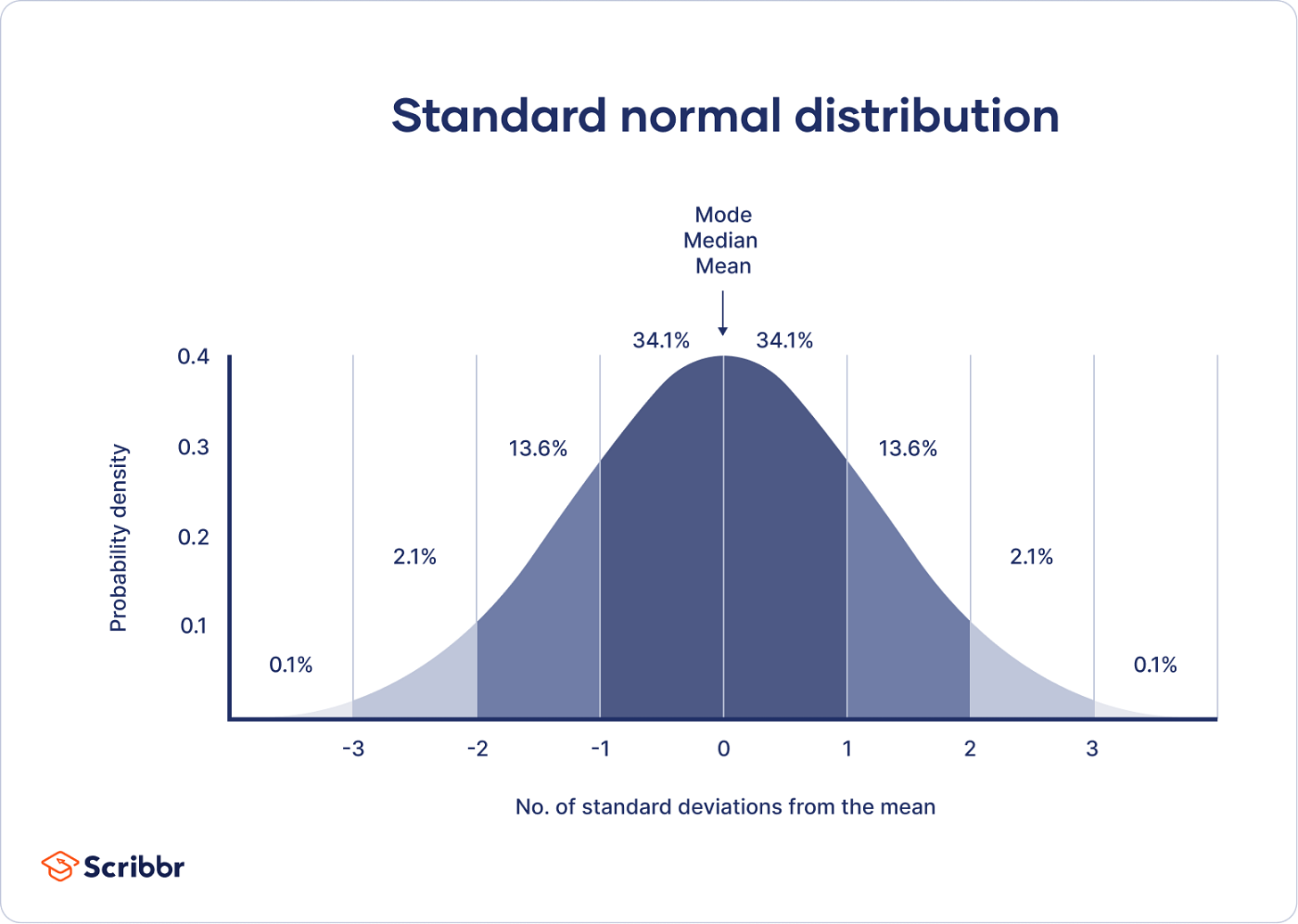

The first thing to understand about research in education is the meaning of an effect size. The figure shows a normal distribution, with vertical lines spaced one standard deviation apart. At a basic level, an effect size measures the difference between two groups in standard deviations. An effect size of 1.0 means the difference is one standard deviation: the distance between adjacent vertical lines on the graph. This means a 50th percentile student moves to the 84th percentile, an 84th percentile student moves to the 98th percentile, etc.
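If you want to check those percentile jumps yourself, here is a minimal sketch in Python. It assumes scores are normally distributed, as in the figure. The function name `shifted_percentile` is mine, not a standard term.

```python
from statistics import NormalDist


def shifted_percentile(start_percentile: float, effect_size: float) -> float:
    """Percentile a student lands at after moving up `effect_size` standard deviations.

    Assumes scores follow a normal distribution.
    """
    standard_normal = NormalDist()  # mean 0, standard deviation 1
    z_start = standard_normal.inv_cdf(start_percentile / 100)
    return 100 * standard_normal.cdf(z_start + effect_size)


for start in (50, 84):
    print(start, "->", round(shifted_percentile(start, 1.0)))
```

Under the same assumption, an effect size of 0.4 moves a 50th percentile student to roughly the 66th percentile.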

A helpful comparison here is to height. American men are, on average, about 5 feet 9 inches tall (175 centimeters) and the standard deviation is about 3 inches (7.6 centimeters). An effect size for height of 1.0 means the effect is, on average, 3 inches (7.6 centimeters). That’s a big difference! The vast majority of effect sizes in education are less than 1. A rough “average effect size” is in the neighborhood of 0.4, which is about 1.2 inches (3 centimeters). Imagine two groups of adult men, where one group is, on average, about 1 inch taller than the other.
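The height conversion is just multiplication. A quick sketch, assuming the 3-inch standard deviation quoted above (the function and constant names are made up for illustration):

```python
SD_INCHES = 3.0      # standard deviation of adult male height, as quoted above
CM_PER_INCH = 2.54


def effect_as_height(effect_size: float) -> tuple[float, float]:
    """Translate an effect size into a height difference in (inches, centimeters)."""
    inches = effect_size * SD_INCHES
    return round(inches, 1), round(inches * CM_PER_INCH, 1)


print(effect_as_height(1.0))  # one full standard deviation
print(effect_as_height(0.4))  # a rough "average" education effect size
```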

Height and education are different in many ways, but one thing they have in common is that they are the product of lots and lots and lots of little variables. The average adult height is about 4 inches (10 centimeters) taller today than it was a few hundred years ago. Height isn’t some number that’s programmed into us from birth. But there also isn’t one silver bullet that makes humans taller. Humans are taller today because of lots and lots of little changes in our lives. The effect size of any one intervention to make people taller is small, but collectively those effects add up to a large change. That’s a decent mental model for thinking about education.

Ok, let’s talk about research. We’ve got effect sizes. Lots of folks in education wield “research” as a way to reinforce their ideas. What are some things to watch out for? Preview: there are a lot of things to watch out for!

Nonsense

There’s a lot of nonsense out there. In a real PD session last year, a very highly-paid consultant told us that “current research shows student attention span in minutes is their age, plus or minus two.” That’s nonsense. A quick Google search will reveal a wide range of answers depending on a wide range of factors. I don’t know what else to say here. Learning styles, the learning pyramid, and lots more fall into this category. If something “research says” sounds too neat and tidy, do a bit of research. Check the citations. Follow the evidence. It might be nonsense.

Correlation vs Causation

One piece of “research” that I’ve seen multiple times in my professional development is this John Hattie meta-analysis on “collective teacher efficacy.” This collection of studies says that, when teachers believe in their students, those students learn more. Hattie references an effect size of 1.57, which is massive! The catch is, none of these studies are experimental. This is a correlational result. We know that teachers’ beliefs in students and high levels of student learning are associated, but we don’t know which is causing the other, or if some other factors are causing both. My hypothesis: high levels of student achievement cause teachers to believe in students. If you work in a school where students do well, it’s easy to believe in those students. If you work in a school that’s struggling, it’s much harder to believe in your students. When a result is correlational, it’s hard to figure out what the result means and easy to draw simplistic conclusions.
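To see how a hidden factor can produce a correlation all by itself, here is a toy simulation. Everything in it is invented for illustration: the variable names, the noise levels, and the assumption that a single "school context" factor drives both teacher belief and student learning. In this simulated world, teacher belief has zero causal effect on learning, yet the two still come out correlated at around 0.5.

```python
import math
import random
from statistics import fmean


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    mean_x, mean_y = fmean(xs), fmean(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return covariance / math.sqrt(var_x * var_y)


def simulate(n_schools=10_000, seed=0):
    """Correlation between belief and learning when neither causes the other."""
    rng = random.Random(seed)
    beliefs, learning = [], []
    for _ in range(n_schools):
        school_context = rng.gauss(0, 1)  # hypothetical hidden confounder
        # Both outcomes depend on the confounder plus independent noise.
        beliefs.append(school_context + rng.gauss(0, 1))
        learning.append(school_context + rng.gauss(0, 1))
    return pearson(beliefs, learning)


print(round(simulate(), 2))
```

A correlational study of these simulated schools would find a strong association, and it would tell us nothing about what happens if we change teacher beliefs.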

Correlational research isn’t all useless. There are lots of places in education where we can’t run experiments for practical or ethical reasons, or we rely on quasi-experiments that aren’t fully randomized. Correlation can give us valuable insights or help triangulate other results. But correlation will always be a limited tool, and it’s easy to be misled. Correlation vs causation is probably the most common issue I see in interpreting education research, so I’m going to drop a bunch more examples in a footnote.[1]

Bias

Another consultant came to my school and told the English teachers that they needed to use the i-Ready digital platform for at least 45 minutes each week. Research showed, they said, that students who used i-Ready for 45 minutes each week made more progress than their peers.

Look, I am so cynical about stuff like this. If you Google the result, you’ll find something on the Curriculum Associates website (the company that produces i-Ready) saying that research supports 45 minutes blah blah blah. The overview starts with, “Curriculum Associates analyzed data…” Let’s just stop there. The company that produced the product analyzed the data for us. How kind of them! It seems like every curriculum commissions some sort of study like this. We should probably just ignore them all. Unless I am 100% convinced a study on a commercial product was done by a disinterested third party, I’m going to assume there’s some bias involved.

Researcher-Designed vs Standardized Measures

I taught in a summer school program as part of my student teaching. During that program, I was required to give the same test at both the start and the end of summer school. My students grew from an average of 31% to an average of 82%. The standard deviation was 15%, meaning my teaching had an effect size of 3.4. 3.4!!! Even higher than collective teacher efficacy! Am I the best teacher in the world? (No.)
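The arithmetic behind that number, as a sketch. It uses the simple "difference in means divided by standard deviation" definition from earlier in the post. Real studies typically compare against a control group and often use a pooled standard deviation, neither of which my summer school class had.

```python
def effect_size(mean_before: float, mean_after: float, standard_deviation: float) -> float:
    """Difference between two means, expressed in standard deviations."""
    return (mean_after - mean_before) / standard_deviation


# Summer school scores in percentage points: 31 before, 82 after, SD of 15.
print(round(effect_size(31, 82, 15), 1))  # 3.4
```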

The reason my results looked so good is that my teaching was very tightly aligned to the outcome assessment. In this case, I was teaching word problems. The school had me introduce a very structured approach to annotating word problems where students got points for underlining the question, circling key information, etc. When they took the assessment at the beginning of summer school, students didn’t know how to annotate the problems correctly so they got lots of points off even if they knew the math. My student teaching wasn’t a research study, but if it were we would call this a researcher-designed assessment. In general, it’s much easier to show growth on a researcher-designed assessment because the intervention can focus efforts on narrow measures of success. It’s much harder to demonstrate growth on a broader measure like state standardized tests or university entrance exams. Even less reliable measures like grade point average are much harder to influence at scale than a narrow researcher-designed measure.

One more example of this phenomenon: Dr. Jo Boaler ran an 18-day math summer camp focused on mindset and open-ended tasks. The study reported an effect size of 0.52. That’s pretty big! But it wasn’t measuring a standardized result. The assessment was four open-ended tasks from the MARS project. What does that mean for regular school learning? It’s hard to say. It’s not surprising that a program focused on open-ended tasks caused an increase in scores on open-ended tasks.

Cost-Benefit Analysis

Here’s an interesting study. Researchers ran a randomized trial of a growth mindset intervention, so some students received the intervention and others did not. The intervention was short: two 25-minute sessions. The result was an effect size of 0.11 on grade point average.

That’s a small effect size. In a lot of contexts, we might be pretty dismissive of an effect of 0.11. But here, the intervention is tiny and easy to implement. Two 25-minute sessions. That’s nothing. If education could find a dozen different interventions with an effect size of 0.11 that take 50 total minutes to administer, that could add up to a substantive change in outcomes. Let’s compare that to a recent study on Khan Academy. Following Khan Academy’s recommendations for 30 minutes per week of computerized learning resulted in an effect size of 0.08. And that’s for the students who spent 30 minutes on the platform, which was only 10% of the total! As any teacher will tell you, finding time for two 25-minute lessons on growth mindset isn’t too hard. Lots of schools have a homeroom or advisory block where they can squeeze in this intervention. Getting students to spend 30 minutes every week on Khan Academy: surprisingly hard, and has a smaller effect size.

This isn’t a full-on endorsement of growth mindset. There’s a lot of variability in growth mindset results, and the researchers always emphasize that growth mindset interventions are challenging to design. The point here is that, if the research is rigorous and randomized and the cost is small, even small effect sizes can matter.

Comparison Group

Rigorous research makes a comparison. If we want to know how well something works, we need to ask: what is it being compared to? Schools have finite time and resources. Teachers constantly navigate tradeoffs.

The World Bank published a paper last year on an AI education intervention. The end result was a respectable effect size of 0.21 on final exam scores. Here’s the catch: the experimental group received a six-week, twice-a-week, 30-minute after-school intervention using AI. The control group received…nothing. As Michael Pershan writes, they “only proved that their program is better than nothing.”

To give one more simple example of this phenomenon: the SAT is an American university entrance exam. Students often take the test multiple times, and on average do better the second time. I found a few different sets of numbers, but we can ballpark the effect size of taking the exam twice at around 0.15-0.20. That’s a significant effect from literally no intervention at all. Students learn things and get better at stuff all the time. If the study compares the same group of students before and after an intervention, we should expect to see some growth even if the intervention doesn’t do anything at all.

The most rigorous education research compares two serious interventions. If we want to know how well a curriculum works, we compare it to another high-quality curriculum. If we want to know how well tutoring works, we compare it to another plausible use of that same time and resources, maybe small-group instruction or extra teacher planning time. The question isn’t whether something works. Most interventions work! The question is, what is the best use of our time and resources?

Replication

One education research result that just will not die is what’s often called Bloom’s 2-sigma study. Benjamin Bloom wrote a paper summarizing two dissertation studies on one-to-one tutoring. The headline result: an effect size of 2. That’s massive, and has been repeated over and over again as evidence that one-to-one tutoring is the gold standard in education.

There are a bunch of issues with this result, but the one I want to talk about here is simple: when other researchers have tried to replicate it, the results have fallen dramatically. There are tons of studies we could look at. One study looked at a large tutoring program in Nashville. The result? An effect size in the range of 0.04 to 0.09 on literacy test scores. There was no effect on math test scores, or on course grades in either subject.

This doesn’t mean tutoring doesn’t work. Plenty of studies find significant effects. The reality is, there’s a ton of research out there. If you want to say something is research-based, you can probably find a study that you can reference. The most valuable research replicates — it has been repeated in different contexts over and over again, giving us some evidence the result is robust. If a result relies on a single study, we should be suspicious.

Heterogeneity

People love to average things in research. Average results, average effect sizes of a bunch of different studies. Those averages can hide heterogeneity. In a classic meta-analysis by Kluger & DeNisi, the researchers found an average effect size of 0.41 for feedback. The catch: huge heterogeneity. One-third of the studies they looked at had a negative effect size: providing feedback decreased performance! The lesson here isn’t about whether feedback is good or bad. It depends!
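Here is a toy illustration of how an average can hide that kind of spread. The six effect sizes below are made up. They are not Kluger & DeNisi's data, just numbers I picked so that the mean comes out to 0.41 and a third of them are negative.

```python
from statistics import mean

# Hypothetical effect sizes for six imaginary feedback studies (NOT real data).
hypothetical_effects = [-0.40, -0.20, 0.30, 0.60, 0.90, 1.26]

average_effect = mean(hypothetical_effects)
share_negative = sum(e < 0 for e in hypothetical_effects) / len(hypothetical_effects)

print(f"average effect size: {average_effect:.2f}")
print(f"share of studies with a negative effect: {share_negative:.0%}")
print(f"range: {min(hypothetical_effects)} to {max(hypothetical_effects)}")
```

Reporting only the first line would make feedback look reliably helpful. The other two lines are where the interesting questions are.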

A few more examples: the growth mindset intervention above showed larger results for lower-achieving students. The effect size of 0.08 for the Khan Academy intervention was limited to the 10% of students who spent the recommended amount of time on the platform. That heterogeneity is important!

Learning is complex. Results depend on all sorts of things. Averaging results together can hide important context.

Lab vs Real World

Many cognitive science phenomena are studied in a lab setting, where researchers can control lots of variables that are hard to control for classroom teachers. Results that are produced in a lab don’t always translate to real classrooms. One example: the interleaving effect is a popular cognitive science result. Interleaving question types (mixing different question types within a study session) typically leads to more durable learning than “blocked” practice where students focus on one question type at a time. That result shows up consistently in lab studies, but has been less consistent in studies conducted in real classrooms. Here is an example of a study in which researchers struggled to replicate the phenomenon in a classroom-based context. This doesn’t mean interleaving is useless. I’m a big fan of interleaving! There is a ton of research on interleaving. Plenty of studies find a positive result in classroom contexts, but those results are often smaller than in a laboratory. These results suggest that interleaving is tricky to get right.

In general, research results are smaller and less consistent in real classrooms. This is common across education research, and we can often learn a lot more from classroom contexts than lab studies.

Months of Learning

If you see someone claim that a teaching practice results in a growth of 4 or 6 or 8 months of learning, you should ignore that number entirely. It’s nonsense. Researchers are typically converting effect sizes into months of learning through some very dubious math. First, no one agrees on what a month of learning is equal to. Sometimes a school year is equal to an effect size of 1, or 0.4, or something else. Second, I hope I’ve convinced you through everything else in this post that effect sizes aren’t that simple. Let’s take the result of my summer school teaching from before: an effect size of 3.4. Did I cause more than three years of additional learning in my three-week summer school class as a student teacher? (No, I did not.) Does taking the SAT a second time result in 4 months of learning? (No.) This is not a serious way to measure learning. We should ignore these claims entirely.
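To make the "dubious math" concrete, here is a sketch of the kind of conversion involved. The function is hypothetical, and the 9-month school year is my assumption. The two conversion rates (a school year equal to an effect size of 1.0 or 0.4) are the ones mentioned above.

```python
def months_of_learning(effect_size, sd_per_school_year, months_per_school_year=9):
    """Convert an effect size to 'months of learning' under an assumed conversion rate.

    sd_per_school_year: how many standard deviations one school year is assumed to be worth.
    months_per_school_year: assumed length of a school year (9 is an assumption).
    """
    return effect_size / sd_per_school_year * months_per_school_year


examples = [("SAT retake", 0.15), ("my summer school class", 3.4)]
for label, effect in examples:
    for sd_per_year in (1.0, 0.4):
        months = months_of_learning(effect, sd_per_year)
        print(f"{label}: effect {effect}, year = {sd_per_year} SD -> {months:.1f} months")
```

Same summer school result, and the answer swings from roughly 31 months to more than 76 months depending on which conversion you pick. Neither number describes anything that happened in my classroom.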

Don’t Average Effect Sizes

Finally, averaging effect sizes. Every time I have been in some sort of PD session and someone brings up John Hattie, I just tune out. Hattie is famous for putting together what’s probably the largest synthesis of education research ever. His website is an interesting reference for meta-analyses of lots of different topics. However, Hattie also distilled his synthesis by publishing an average effect size for over 100 different topics in education research. You can think of this entire post as an argument that averaging effect sizes doesn’t make any sense. Those averages then get taken out of context by people who want to make a particular claim about a particular area of education research. Hattie’s work can be a helpful starting point: if you want to learn about a topic, his website collects a lot of research on lots of different topics. The average effect sizes we should mostly ignore.

In Summary

Teaching is hard. It would be great if there was some magic spell I could cast on my students to make them learn more. Unfortunately, there isn’t. You can maybe tell from my tone in this post that I have a lot of disdain for the ways research is typically used in education. I believe in the value of evidence-informed teaching, but I mostly see research used in simplistic or opportunistic ways to justify the fad du jour. If we are serious about improving education, that means finding lots and lots of things that make a small difference, and adding up those small differences to make a substantive change in the quality of the education students receive. Finding lots and lots of things that make a small difference means being rigorous in how we interpret evidence.

No research is perfect. The best evidence has been replicated in multiple contexts and demonstrates converging evidence across different types of studies. There are a bunch of studies I’ve mentioned in this post that fail half the criteria or more. Let’s start by ignoring those, and focus on what’s left.

There are two big traps in education research. The first is oversimplification. It’s tempting to pick out some large, flashy effect and claim that this one thing is the answer to all of our problems. It isn’t. Teaching is hard. There’s no one idea coming to save us.

The second trap is dismissing small effects. In reality, all effects are small. Large effects are usually the result of shoddy research. This is tricky! Small effects can be spurious. We need to think rigorously about which small effects are real, which are worth the effort, and what we need to do to execute them effectively. That’s all hard, and that’s the core work of improving teaching and learning.

Very few studies will meet all of the criteria I laid out above. We don’t need to dismiss everything. Serious ideas to improve education come from multiple lines of evidence. That evidence is never going to be perfect. There’s no one silver bullet to improve education, and there’s no one secret thing that guarantees a study is robust and useful for teachers. There are no shortcuts. The goal should be to read broadly, evaluate evidence rigorously, and do the slow, careful work of figuring out what really works.

[1] A few more examples on the correlation vs causation front. This grade inflation study got a lot of attention with a flashy headline: students of a teacher who inflates grades lose $160,000 of collective lifetime earnings. (The 160k is a misleading way to frame things, but let’s put that aside for now.) This is a correlational study. There is a correlation between having a teacher who grades more leniently and making less money as an adult. Here is a simple, alternative explanation: there is a confounding variable, let’s call it “challenge avoidance.” Students with higher challenge avoidance are more likely to take classes from teachers who grade leniently. In many high schools, there are ways to change one’s schedule and teacher reputations are well established. Challenge-avoidant students switch out of classes with tougher teachers into classes with easier teachers. That same trait, challenge avoidance, leads those same students to make less money as adults because they put less effort into seeking out challenging roles and climbing the ladder in their chosen career. That’s a long explanation, and the reality is probably messier, but this is a helpful thought experiment to understand why correlational research can lead us astray. I’m sure there are lots and lots of variables at play here, and a correlational study can’t account for all of them.

Another hot topic right now is classroom technology. Folks like Dr. Jared Cooney Horvath point out that there is a correlation between the introduction of classroom technology and declining test scores. The issue is, lots of other things changed around the same time. Student social media use and phone use increased, Covid disrupted schooling, and schools saw significant demographic change. Maybe classroom technology did play a large role in the decline, but correlational evidence alone should make us cautious.

Finally, something lots of districts, including mine, are talking about right now is attendance. My district made a big deal at the beginning of this year about the fact that low attendance correlated with low achievement. I believe it! But the assumption is that to improve achievement, we need to improve attendance. Maybe the causation goes the other way: maybe students come to school less when they are having a hard time and feel dumb in class. Maybe we need to improve achievement to improve attendance. More likely, it’s a complicated combination of both of those and lots of other factors.

Look, I’m sympathetic to all of these arguments. I think grade inflation is bad. I think schools should radically reduce student screen time. I think attendance is important. There are two lessons here: first, let’s think carefully about claims based on correlational evidence. The causal factors are usually much more complex than correlational results make it seem. Second, correlational evidence typically results in very large effects. Horvath recently wrote about how strong the correlations are between classroom technology use and declining achievement, and shared some compelling graphs. We should take that evidence seriously. At the same time, the claim that large declines in achievement were largely caused by education technology implies that if we remove that technology, we are likely to see a large increase in achievement. I doubt that’s going to happen. All of this is just too complex. Leaning hard on correlational evidence can overstate the potential impact of interventions, and do long-term harm when results don’t meet expectations.

This is great! I thought that after grad school, I wouldn’t have to evaluate research studies anymore. Wrong! The longer I teach, the more I read, and the more research I evaluate in order to improve my pedagogical practice. Recently, I used much of what you’ve outlined here when evaluating Cognitive Mutualism research. I’m bookmarking this so that I can keep referring back to it.

"However, Hattie also distilled his synthesis by publishing an average effect size for over 100 different topics in education research."

After I read Visible Learning, I started to look for criticisms. There were very few. Some of the more persuasive ones were from mathematicians / statisticians / logicians, who said that the way he combined different studies was problematic (among other things). Didau summarizes those here:

https://daviddidau.substack.com/p/visible-learning-invisible-errors